Automating your privacy operations by integrating MineOS with Google BigQuery

This integration allows you to:

- Automate Data Subject Requests (DSR) for user data from Google BigQuery.

- Preview and search user data in BigQuery.

- Perform data classification to detect data types stored in your BigQuery tables.

Before you start

- Make sure your MineOS plan supports integrations.

- Make sure you have access to Google Cloud Platform and Google BigQuery.

Setting up

To connect the BigQuery integration, follow these steps:

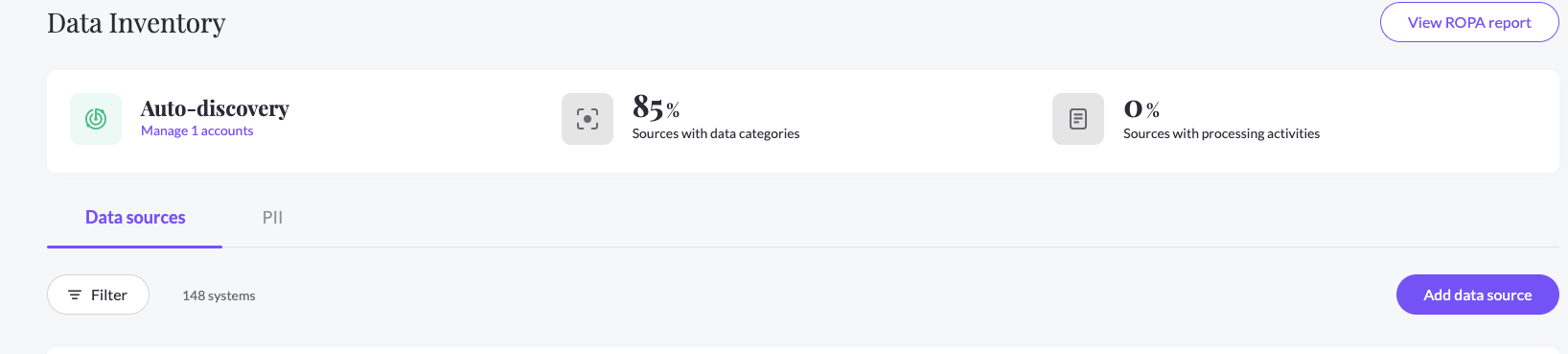

- On the left sidebar, click Data Inventory and then Data Sources

- Click on Add data source

- Select Google BigQuery from the catalog, then open the Google BigQuery page from your data sources list

- In the Request handling tab, check the relevant checkboxes and choose the integration handling style:

  - Handle this data source in privacy requests: use the integration to handle Data Subject Requests (DSR)

  - Scan this source using Data Classifier: use the integration to perform Data Classification and scan for PII data types

- Select your Integration Type:

  - BigQuery Tables (for connecting with OAuth)

    - Click "Connect" and follow the on-screen instructions to grant the required permissions.

    - Some organizations have a session timeout enabled for Google Cloud scopes. To avoid session timeouts for this integration, mark it as a Trusted App and exempt trusted apps from session timeout. Refer to Google's article for help.

    - Note: if you skip this, the integration's session will expire and you will have to reconnect it each time.

    - Enter your Google Cloud Project ID and click Save

  - BigQuery Tables - Service Account

Instructions

Step 1

- Open IAM and Admin on your Google Cloud Console

- From the top bar, choose the organization you’d like to scan

Step 2

- On the IAM page, click Grant access

- Copy your Principal account and paste it into New Principal in GCP.

Step 3

- Under Assign roles, add the required role:

  - If using the integration for DSR automation, you will need to add the BigQuery Admin role.

  - If using the integration only for Content Discovery, you can add the following roles: BigQuery Data Viewer, BigQuery Job User

- Enter your Google Cloud Project ID and click Test And Save

- Note: if you receive an Authentication Error, make sure that you have added the right roles for the principal.
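If you prefer to grant the roles from the command line rather than the IAM page, the role choice above can be sketched as a small helper that prints `gcloud` commands. This is an illustrative sketch, not part of the MineOS setup flow: it assumes the principal is a service account (hence the `serviceAccount:` prefix) and that the roles are bound at project level. The use-case keys and the function name are hypothetical; the role IDs are Google Cloud's predefined BigQuery roles.

```python
# Predefined BigQuery role IDs, grouped by how the integration is used.
# The keys "dsr" and "content_discovery" are hypothetical labels for this sketch.
ROLES_BY_USE_CASE = {
    "dsr": ["roles/bigquery.admin"],
    "content_discovery": ["roles/bigquery.dataViewer", "roles/bigquery.jobUser"],
}


def grant_commands(project_id: str, principal: str, use_case: str) -> list[str]:
    """Build one gcloud command per role needed for the given use case.

    Assumes the principal is a service account and a project-level binding.
    """
    return [
        "gcloud projects add-iam-policy-binding "
        f"{project_id} --member=serviceAccount:{principal} --role={role}"
        for role in ROLES_BY_USE_CASE[use_case]
    ]


if __name__ == "__main__":
    # Placeholder project ID and principal; replace with your own values.
    for cmd in grant_commands(
        "my-project",
        "principal@example.iam.gserviceaccount.com",
        "content_discovery",
    ):
        print(cmd)
```

Review the printed commands before running them, and adjust the member prefix or binding level if your principal or setup differs from these assumptions.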


Content Discovery

- When using Content Discovery for your Google BigQuery database, make sure the Google BigQuery OAuth user has the required permissions on the relevant data.

- In the Request handling tab, check the Scan this source using Data Classifier checkbox to use the integration for Data Classification and scanning for PII data types.

- Choose the Integration Type: 'BigQuery Tables' or 'BigQuery Tables - Service Account' to scan your tables, or 'BigQuery Views' or 'BigQuery Views - Service Account' to scan the views in your database instead.

- For OAuth, click 'Connect' to connect your Google BigQuery OAuth account to MineOS and allow the app to read your database content. For Service Account, click Test And Save.

- After the connection succeeds, click 'Save', then go to the Data Classification pane below and select 'Start Scan' with the desired confidence level.

- During content discovery, MineOS scans your data and analyzes each table's data in our PII Processing Engine. The scan results are added to the data types in your integration's general info, according to the likelihood of each data type found.

Optional: Defining queries for DSR flow

To manage your DSR handling actions, you need to define the queries that will run in BigQuery for each possible action:

Note: The queries you use won't be validated. Run them in your BigQuery account first to make sure they behave as expected.
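To try a query by hand, you first need to swap its `{{endUserEmail}}` variable (used in the example queries in this article) for a real value. The sketch below does that substitution so the result can be pasted into the BigQuery console. It assumes the value should appear as a quoted string literal in a manual test; how MineOS itself substitutes the variable is not described here, and the function name is hypothetical.

```python
PLACEHOLDER = "{{endUserEmail}}"


def render_for_manual_test(query_template: str, test_email: str) -> str:
    """Replace the placeholder with a quoted BigQuery string literal."""
    if PLACEHOLDER not in query_template:
        raise ValueError(f"query does not contain {PLACEHOLDER}")
    # Escape backslashes first, then single quotes, so the literal stays valid.
    escaped = test_email.replace("\\", "\\\\").replace("'", "\\'")
    return query_template.replace(PLACEHOLDER, f"'{escaped}'")


if __name__ == "__main__":
    template = (
        "SELECT name, age, country FROM `dataset.table` "
        "WHERE email = {{endUserEmail}}"
    )
    # Use a known test record rather than real user data.
    print(render_for_manual_test(template, "test.user@example.com"))
```

For the Delete query, consider running the rendered statement against a test table or with a test record first, since a deletion cannot be previewed this way.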

Optional: Preview Query

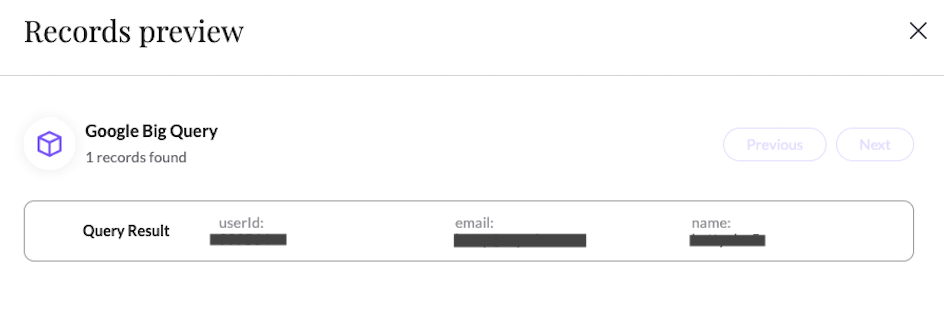

The Preview query will be used when opening the ticket processing screen and is responsible for showing how many records were found as well as showing a sample (preview) of the data.

Example Query:

SELECT name, age, country FROM `dataset.table` WHERE email = {{endUserEmail}}

* Preview returns the number of records in the query response

* Preview shows the first 3 values from the query response; it supports string and long types

* The {{endUserEmail}} variable is mandatory
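As a rough illustration of the Preview behavior described above, the sketch below summarizes a query response as a count plus a short sample. It is not MineOS code: the function name and the result shape are hypothetical, and treating each "value" as one returned row is an assumption.

```python
def preview_summary(rows, sample_size=3):
    """Return the record count and the first few entries of a query response."""
    rows = list(rows)
    return {"count": len(rows), "sample": rows[:sample_size]}


if __name__ == "__main__":
    # Made-up rows standing in for a query response.
    response = [
        {"name": "Ada", "age": 36, "country": "UK"},
        {"name": "Lin", "age": 41, "country": "SG"},
        {"name": "Sam", "age": 29, "country": "US"},
        {"name": "Eve", "age": 52, "country": "DE"},
    ]
    print(preview_summary(response))
```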

Optional: Copy Query

The Copy query is used for the Copy action, which runs on the ticket processing page of a Copy ticket when you click Generate Copy.

Example Query:

SELECT * FROM `dataset.table` WHERE email = {{endUserEmail}}

* Copy shows all records returned in the query response

* The {{endUserEmail}} variable is mandatory

Required: Delete Query

The Delete query is used for the Delete action, which runs on the ticket processing page of a Deletion ticket when you click Delete from X sources.

Example Query:

DELETE FROM `dataset.table` WHERE email = {{endUserEmail}}

* The {{endUserEmail}} variable is mandatory

Paste the project ID and queries into the matching inputs in the Request handling tab, then click Save.
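Because the queries are not validated when you save them, a quick local check before pasting can catch the two rules stated above: the Delete query is required, and every query must contain the variable. The following is a minimal sketch of such a check; the function name and the `preview`/`copy`/`delete` keys are hypothetical and only used for this example.

```python
PLACEHOLDER = "{{endUserEmail}}"


def check_queries(queries: dict[str, str]) -> list[str]:
    """Return a list of problems found; an empty list means none were found."""
    problems = []
    # The Delete query is the only one marked as required.
    if not queries.get("delete", "").strip():
        problems.append("delete: query is required")
    # Every query that is provided must contain the mandatory variable.
    for action, sql in queries.items():
        if sql.strip() and PLACEHOLDER not in sql:
            problems.append(f"{action}: missing {PLACEHOLDER}")
    return problems


if __name__ == "__main__":
    queries = {
        "preview": "SELECT name, age, country FROM `dataset.table` WHERE email = {{endUserEmail}}",
        "copy": "SELECT * FROM `dataset.table` WHERE email = {{endUserEmail}}",
        "delete": "DELETE FROM `dataset.table` WHERE email = {{endUserEmail}}",
    }
    for problem in check_queries(queries):
        print(problem)
```

This only checks for the presence of the variable; it does not confirm that the SQL is valid or that it selects the intended rows.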

Working with custom identifiers

If you wish to use this integration with a user identifier that is not email, you will need to provide it for each request by making an UpdateMetadata API call.

When making the API call, make sure to use the following field name: $googlebq

"customFields": {

"$googlebq": "1234567"

}

You can use any value you like, and it will be used to replace the {endUserName} parameter when running queries.

Note: you still need to use the {endUserName} parameter in queries.
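The `customFields` fragment shown above can be built programmatically before you send it. This sketch covers only that fragment: the UpdateMetadata endpoint, authentication, and any other fields in the request body are not described in this article and are deliberately left out. The function name is hypothetical.

```python
import json


def custom_identifier_fields(identifier: str) -> dict:
    """Build the customFields fragment carrying the BigQuery custom identifier."""
    return {"customFields": {"$googlebq": identifier}}


if __name__ == "__main__":
    # Prints the fragment as JSON, ready to merge into the UpdateMetadata request body.
    print(json.dumps(custom_identifier_fields("1234567"), indent=2))
```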

Permissions required to use this integration

Mine will request the following BigQuery permissions when you activate this integration:

| Scope | Description |
| --- | --- |
| ./auth/bigquery | View and manage your data in Google BigQuery and see the email address for your Google Account |

Current Limitations

- BigQuery data classification does not support scanning partitioned tables.

What's next?

Read more about the deletion process using integrations here.

Read more about the get-a-copy process using integrations here.

Talk to us if you need any help with Integrations via our chat or at portal@saymine.com, and we'll be happy to assist!🙂